Robots.txt is a file that tells search engines which areas of your website they may crawl.

What exactly is its role? How do you create a robots.txt file? And how can you use it for your SEO?

What is the robots.txt file?

A robots.txt file is a plain text file located at the root of your website. Its sole role is to keep search engine bots from crawling certain areas of your website. It is one of the first files that robots (spiders) read when they visit a site.

What is it for?

The robots.txt file gives instructions to the search engine bots that crawl your website. It implements the Robots Exclusion Protocol. With this file you can block crawling of:

- Your entire website for certain robots (also called "spiders" or "agents").

- Certain pages of your website for all robots, and/or certain pages for certain robots.

To appreciate the value of a robots.txt file, consider a website made up of a public area for external communication and an intranet reserved for employees and customers. In this case, bots are allowed into the public area, while the private area is off limits.

The file also tells search engines where to find the website's sitemap file.
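The intranet scenario can be tried out with Python's standard `urllib.robotparser` module. The sketch below is only illustrative: the domain `www.example.com`, the robot name `BadBot`, and the paths are assumptions, not values from a real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: one robot is banned from the whole site,
# every other robot is only kept out of the intranet.
ROBOTS_TXT = """\
User-agent: BadBot
Disallow: /

User-agent: *
Disallow: /intranet/

Sitemap: https://www.example.com/sitemap_index.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Public area: allowed for ordinary robots.
public_ok = parser.can_fetch("Googlebot", "https://www.example.com/products/")
# Private area: disallowed for everyone.
intranet_ok = parser.can_fetch("Googlebot", "https://www.example.com/intranet/hr/")
# The banned robot may not fetch anything.
badbot_ok = parser.can_fetch("BadBot", "https://www.example.com/products/")
# Sitemap addresses declared in the file (site_maps() needs Python 3.8+).
sitemaps = parser.site_maps()

print(public_ok, intranet_ok, badbot_ok, sitemaps)
```

Keep in mind that these rules are requests to well-behaved crawlers. They do not protect the intranet from direct access, so real access control is still needed.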

Where can I find the robots.txt file?

The robots.txt file lives at the root level of your website. To check whether your site has one, type its address into your browser's address bar, as in this example: "https://www.indirizzodeltuositoweb.com/robots.txt".

If the file is:

- Absent, a 404 error is displayed. Bots then assume that no content is off limits.

- Present, its contents are displayed and bots follow the instructions it contains.
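The same check can be scripted. The following sketch builds the robots.txt address from any page URL and reports whether the file is there. The function names and the `www.example.com` domain are illustrative assumptions, and `robots_txt_status` needs network access, so it is defined but not called here.

```python
from urllib.error import HTTPError
from urllib.parse import urlsplit, urlunsplit
from urllib.request import urlopen


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL for the site that hosts page_url."""
    parts = urlsplit(page_url)
    # robots.txt always sits at the root, whatever page we started from.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def robots_txt_status(page_url: str) -> str:
    """Fetch robots.txt over the network and report 'present' or 'absent'."""
    try:
        with urlopen(robots_txt_url(page_url), timeout=10) as response:
            if response.status == 200:
                return "present"
            return f"unexpected status {response.status}"
    except HTTPError as error:
        # A 404 means there is no file, so nothing is disallowed.
        if error.code == 404:
            return "absent"
        return f"unexpected status {error.code}"


print(robots_txt_url("https://www.example.com/blog/post?id=1"))
# → https://www.example.com/robots.txt
```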

How do you create a robots.txt file?

To create your robots.txt file, you first need access to the root of your domain.

You can create the robots.txt file manually, or rely on the one that most CMSs, such as WordPress, generate by default at installation. You can also build the file with one of the many online generators.

To create it manually, use a plain text editor such as Notepad or Visual Studio Code and respect the following:

- The file name: robots.txt.

- The directives and their syntax.

- The structure: one directive per line, with no blank lines inside a group of rules.
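As a minimal sketch of these three constraints, the Python snippet below writes a robots.txt file with one directive per line. The `write_robots_txt` helper and the two directives are illustrative assumptions, and a temporary folder stands in for the real web root.

```python
import tempfile
from pathlib import Path


def write_robots_txt(root_dir: Path, directives: list[str]) -> Path:
    """Write one directive per line to <root_dir>/robots.txt and return the path."""
    target = root_dir / "robots.txt"  # the file name must be exactly robots.txt
    target.write_text("\n".join(directives) + "\n", encoding="utf-8")
    return target


# Hypothetical rules used only for this example.
directives = [
    "User-agent: *",
    "Disallow: /intranet/",
]

# A temporary folder stands in for the real web root in this sketch.
with tempfile.TemporaryDirectory() as tmp:
    path = write_robots_txt(Path(tmp), directives)
    written = path.read_text(encoding="utf-8")
    file_name = path.name

print(written)
```

On a real site, the resulting file would then be uploaded to the root folder of the domain.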

You need FTP access to reach the root folder of your website. If you do not have the necessary permissions, you will not be able to create the file yourself and will need to contact your web agency or hosting provider.

Robots.txt directives and syntax

Robots.txt files use the following commands, or directives:

- Allow: grants access to a specific URL located inside a disallowed folder.

- Disallow: prevents user agents from accessing a specific URL or folder.

- User-agent: names the search engine robot that the rules apply to, for example "Bingbot" for Bing or "Googlebot" for Google.
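Each directive follows the same `field: value` shape, which makes a line easy to split programmatically. The helper below is a simplified sketch (the name `parse_directive` is an assumption, and real crawlers handle more edge cases); it lowercases the field name and discards comments.

```python
from typing import Optional, Tuple


def parse_directive(line: str) -> Optional[Tuple[str, str]]:
    """Split one robots.txt line into (field, value); None for blanks and comments."""
    content = line.split("#", 1)[0].strip()  # drop any trailing comment
    if not content or ":" not in content:
        return None
    # Split on the first colon only, so URLs in the value stay intact.
    field, value = content.split(":", 1)
    return field.strip().lower(), value.strip()


for sample in [
    "User-agent: Googlebot",
    "Disallow: /intranet/ # private area",
    "# a comment line",
]:
    print(parse_directive(sample))
```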

Example robots.txt file:

# robots.txt file for the site https://www.yourwebsiteaddress.com/

User-agent: * # the rules below apply to all robots

Disallow: /intranet/ # blocks crawling of the intranet folder

Disallow: /login.php # blocks crawling of the URL https://www.yourwebsiteaddress.com/login.php

Allow: /*.css?* # allows access to CSS resources (URLs containing ".css?")

Sitemap: https://www.yourwebsiteaddress.com/sitemap_index.xml # link to the sitemap for SEO

In the example above, the asterisk (*) makes the User-agent directive apply to all crawlers. The hash sign (#) introduces comments, which bots ignore.
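Inside a path, the asterisk acts as a wildcard for crawlers that support it: it matches any sequence of characters, and a trailing `$` anchors the end of the URL. The sketch below illustrates that matching logic under those assumptions. The function name `rule_matches` is hypothetical, and the code is not the implementation of any particular search engine.

```python
import re


def rule_matches(pattern: str, path: str) -> bool:
    """Check a URL path against a robots.txt path pattern with * and $ wildcards."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape literal characters, then let each * match any run of characters.
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    regex = "^" + body + ("$" if anchored else "")
    return re.match(regex, path) is not None


print(rule_matches("/*.css?*", "/theme/style.css?ver=2"))  # → True
print(rule_matches("/*.css?*", "/theme/style.css"))        # → False
print(rule_matches("/intranet/", "/intranet/hr/"))         # → True
```

Note that the `?` in `/*.css?*` is a literal character, so under these semantics the pattern only matches CSS URLs that carry a query string. A pattern such as `/*.css` would cover plain stylesheet URLs as well.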

You can find resources for specific search engines and content management systems at robots-txt.com.

Robots.txt and SEO

In terms of SEO for your website, robots.txt lets you:

- Point bots to your sitemap, giving them precise guidance on which URLs you want indexed.

- Save the "crawl budget" that bots spend on your site by excluding low-quality pages.

- Keep bots from crawling duplicate content.

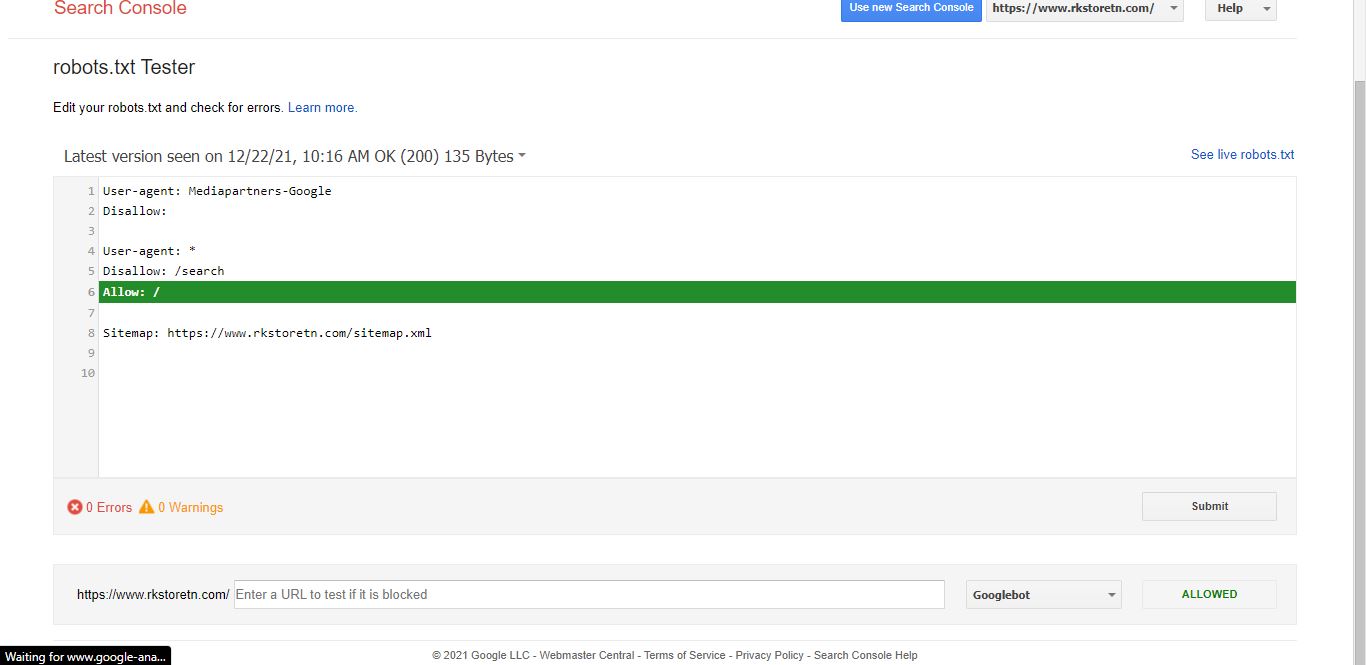

How do you test your robots.txt file?

To test your robots.txt file, all you need to do is add and verify your site in Google Search Console. Once your account is set up, click Crawl in the menu and then robots.txt Tester.

The robots.txt test checks whether your most important URLs can be crawled by Google and warns you if there is an error.
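A rough local version of this check can be sketched with Python's standard `urllib.robotparser` module. This is not Google's tool and may not interpret every rule the way Google does. The `blocked_urls` helper, the rules, and the list of URLs are assumptions made for the example.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given rules forbid user_agent to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules and pages used only for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /intranet/
Disallow: /login.php
"""

important = [
    "https://www.yourwebsiteaddress.com/",
    "https://www.yourwebsiteaddress.com/products/",
    "https://www.yourwebsiteaddress.com/login.php",
]

blocked = blocked_urls(ROBOTS_TXT, important)
print(blocked)
```

An empty result means none of the listed pages is blocked by the rules. Any URL that does appear deserves a second look before the file goes live.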

Finally, if you want to control how your website is indexed, you need to create a robots.txt file. If no file is present, every URL that bots find can be crawled and may end up in search engine results.